In the past, jQuery provided easy access to solutions that were complicated in core JavaScript, and it shielded us from difficult workarounds for legacy browsers. But times have changed, and many of those things can now be done just as easily using JavaScript directly.

jQuery is a fast, small, and feature-rich JavaScript library. It makes interactions with HTML documents easy, and is widely used in web development to add features to web pages and to simplify the process of writing JavaScript. With a combination of versatility and extensibility, jQuery has changed the way that millions of people write JavaScript.

That being said, jQuery is still a popular and widely used library, and there are many valid reasons to continue using it. It is ultimately up to you to decide whether the benefits of using jQuery outweigh the potential drawbacks in your particular situation.

Easily search and compare direct JavaScript solutions with their jQuery counterparts.

How jQuery does it:

```javascript
$.getJSON('/my/url', function (data) {});
```

Pure JavaScript:

```javascript
const response = await fetch('/my/url');
const data = await response.json();
```
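One difference is worth keeping in mind: `fetch` does not reject on HTTP error statuses such as 404, so a drop-in replacement for `$.getJSON` usually checks `response.ok` itself. A minimal sketch, assuming a helper called `getJSON` (a made-up name) and an injectable `fetchImpl` parameter that only exists to make the helper easy to test:

```javascript
// Hypothetical helper: fetches a URL and parses the body as JSON.
// `fetchImpl` is an assumption for testability; it defaults to the global fetch.
async function getJSON(url, fetchImpl = fetch) {
  const response = await fetchImpl(url, {
    headers: { Accept: 'application/json' },
  });
  // fetch only rejects on network errors, so HTTP errors are checked by hand.
  if (!response.ok) {
    throw new Error(`Request failed with status ${response.status}`);
  }
  return response.json();
}
```

Usage would then look like `const data = await getJSON('/my/url');` inside an async function or a module.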

UmbrellaJS is a lightweight JavaScript library that provides a number of utility functions and features for working with DOM elements and handling events. It was designed to be small, fast, and easy to use, and it does not have any dependencies on other libraries.

Some of the features provided by UmbrellaJS include:

UmbrellaJS is a good choice for developers who want a simple, lightweight library for working with DOM elements and handling events. It is especially well-suited for smaller projects or for developers who want to avoid the overhead of larger libraries like jQuery.

Selector demos:

```javascript
u('ul#demo li')
u(document.getElementById('demo'))
u(document.getElementsByClassName('demo'))
u([ document.getElementById('demo') ])
u( u('ul li') )
u('<a>')
u('li', context)
```

Documentation

Migrate from jQuery

HTMX is a JavaScript library that allows you to add asynchronous communication and other interactive features to your web pages using HTML attributes. It allows you to make AJAX (Asynchronous JavaScript and XML) requests and handle the responses directly in your HTML, usually without writing any JavaScript code yourself.

HTMX works by intercepting events on HTML elements and making asynchronous requests based on the attributes you specify. For example, you can use the hx-get attribute to make a GET request to a specified URL, and use the hx-trigger attribute to specify an event that should trigger the request. You can also use the hx-target attribute to specify an element on the page where the response should be inserted.

Here’s an example of how you might use HTMX to make a GET request and insert the response into a div element:

```html
<button hx-get="/some/url" hx-target="#target">Load Data</button>
<div id="target"></div>
```

When the button is clicked, HTMX will make a GET request to /some/url and insert the response into the div element with the id of target.
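For comparison, here is a rough idea of what that markup saves you from writing by hand. This is only a sketch, not how HTMX is implemented: `loadInto` and `wireButton` are made-up names, and the `data-url` / `data-target` attributes are assumptions chosen so they do not clash with HTMX's own `hx-*` attributes.

```javascript
// Fetch a URL and place the response text inside the target element,
// which mirrors HTMX's default behaviour of replacing the target's inner HTML.
async function loadInto(target, url, fetchImpl = fetch) {
  const response = await fetchImpl(url);
  target.innerHTML = await response.text();
}

// Wire a button so a click loads `data-url` into the element matched by `data-target`.
// `root` is the document (or any object offering querySelector).
function wireButton(button, root, fetchImpl = fetch) {
  button.addEventListener('click', () => {
    const target = root.querySelector(button.dataset.target);
    return loadInto(target, button.dataset.url, fetchImpl);
  });
}
```

In a browser this would be called as `wireButton(document.querySelector('button'), document)`. With HTMX, the two attributes on the button replace all of it.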

HTMX is designed to be easy to use and flexible, and it can be used to add a wide range of interactive features to your web pages.

I am currently using HTMX in one of my long-term projects and will be talking about it more in a separate article in the future!

HTMX Reference / Documentation

It is ultimately up to you to decide which approach is best for your particular project. Sometimes a combination is needed ;)

A fullstack developer is a software engineer who has expertise in all layers of a web application’s stack. This includes both the frontend, which is the user-facing part of the application, and the backend, which is the server-side portion of the application.

To become a fullstack developer, one must have a solid understanding of a wide range of technologies. This includes proficiency in at least one programming language, such as JavaScript or Python, as well as knowledge of databases, server infrastructure, and web development frameworks.

One of the key benefits of being a fullstack developer is that they can work on any part of a web application, from the design and user experience to the underlying server-side logic. This means that they can take on a wide range of roles and responsibilities, from designing the user interface to implementing complex business logic.

Fullstack developers are also in high demand, as the skills they possess are highly sought-after in the job market. This is because companies are increasingly looking for engineers who can work on both the frontend and backend of their web applications, rather than hiring separate teams for each layer of the stack.

In addition to their technical skills, fullstack developers must also have strong problem-solving and communication skills. This is because they often work on teams and need to be able to collaborate effectively with other developers, as well as communicate their ideas to non-technical stakeholders.

Overall, being a fullstack developer is a challenging but rewarding career path. It requires a diverse set of skills and the ability to adapt to new technologies, but the rewards include the opportunity to work on a wide range of projects and the satisfaction of seeing your work come to life in the form of a web application.

Facebook sucks … and now on to how to save pages connected to a gray account! It might not work for everyone, but it solved it for me.

“A gray account is an account used to log into Facebook that is not associated with a personal profile or account. People used to be able to manage their Pages with gray accounts before we required individuals to have a personal Profile in order to create, manage, or run ads on a Page.” – Facebook Help

Normally it should be easy to transfer a page to a new administration account. Right? RIGHT!

My customer's page was on the new profile page layout that was introduced a while back. So normally you should go to the Page -> Professional Dashboard -> Settings -> Site Access. This would then allow you to assign a new page admin.

This just straight fails for me! I can easily choose a new person, select the person and allow full access, confirm with my password, and then nothing happens. When checking the console, I see a couple of random errors …

Tried with different accounts, different browsers, different OS. Always the same …

After trying everything and almost giving up, I thought: well, you can still switch back to the old page layout, maybe that works!

And that is what finally worked for me. I was able to assign my customer as a new admin within Settings -> Site Roles and then switch back to the new page layout!

Again … Facebook sucks! Who is test-driving updates and checking for incoming errors? It seems that no one cares. Just leave it to the users to solve their own problems. Not a single resource that actually helps. I am sure that many have already lost their pages! Just unbelievable!!!

Happy coding!

A while back, a potential customer asked me whether it is possible to restructure a WordPress Multisite setup with WPML to use a simpler, custom URL structure.

web.site/de/

web.site/en/

Languages would normally be added like this:

web.site/nl-nl/de/

web.site/nl-nl/en/

The customer wanted it to be restructured / simplified like this:

web.site/de-nl/

web.site/en-nl/

This basically mimics the structure of a single WPML website with custom languages, but with all the benefits of a multisite.

This is nothing that WPML or WordPress Multisite provides out of the box.

I built a prototype setup to make it work.

This is not something that I would propose for everyone, as it requires a lot of tweaks for anything that handles dynamic links (plugins, hooks, core systems, page builders …).

It's doable :)

One thing that needs to be tweaked globally, is the mapping of the new url structure.

So web.site/nl-nl/en/ needs to become web.site/en-nl/

This needs to be handled on the server side, by proxying the original to the new structure.

This can be easily done using Apache or NGINX.

With that web.site/nl-nl/en/ will be proxied to web.site/en-nl/, but any core navigation will not work yet.
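The real mapping lives in the Apache or NGINX configuration, but the rule itself is easier to see when spelled out. The sketch below is an illustration only: `toPublicPath` and `toInternalPath` are made-up names, and it assumes that every subsite slug has the form `xx-yy`, that the public prefix is `<language>-<country>`, and that the list of known sites (`nl-nl` here) is maintained by hand.

```javascript
// Internal multisite path -> public path, e.g. "/nl-nl/en/about/" -> "/en-nl/about/".
// Only rewrites paths that start with a known subsite slug (assumption: "nl-nl").
function toPublicPath(path, sites = ['nl-nl']) {
  const match = path.match(/^\/([a-z]{2}-[a-z]{2})\/([a-z]{2})(\/.*)?$/);
  if (!match || !sites.includes(match[1])) return path;
  const country = match[1].split('-')[1];
  const rest = match[3] || '/';
  return `/${match[2]}-${country}${rest}`;
}

// Public path -> internal multisite path, e.g. "/en-nl/about/" -> "/nl-nl/en/about/".
// Meant for incoming public requests only: an internal path such as "/nl-nl/en/"
// also looks like a public prefix, so never run already-internal paths through it.
function toInternalPath(path, siteByCountry = { nl: 'nl-nl' }) {
  const match = path.match(/^\/([a-z]{2})-([a-z]{2})(\/.*)?$/);
  if (!match) return path;
  const site = siteByCountry[match[2]];
  if (!site) return path;
  const rest = match[3] || '/';
  return `/${site}/${match[1]}${rest}`;
}
```

The proxy applies the second direction to incoming requests, while the WordPress hooks further down apply the first direction to every generated link.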

This is the fastest solution that I came up with, within the hour I gave myself ;)

There surely are other options, like the core rewrites / restructuring of the core shorturl handling. But these approaches might break things in far more areas.

Using the proxy approach keeps the core as it is. The solution needs to be as simple as possible, so that it stays maintainable in the future :)

Just for the basic setup, a couple of hooks are required to make this work; more might be needed depending on the plugins in use.

Here are a couple of examples:

WordPress site_url

```php
add_filter( 'site_url', 'custom_site_url' );

function custom_site_url( $url ) {
    if ( is_admin() ) { // you probably don't want this in admin side
        return $url;
    }

    return str_replace( "/nl-nl/en/", "/en-nl/", $url );
}
```

WordPress Nav Links

```php
add_filter( 'post_link', 'changePermalinks', 10, 3 );
add_filter( 'page_link', 'changePermalinks', 10, 3 );
add_filter( 'post_type_link', 'changePermalinks', 10, 3 );
add_filter( 'category_link', 'changePermalinks', 11, 3 );
add_filter( 'tag_link', 'changePermalinks', 10, 3 );
add_filter( 'author_link', 'changePermalinks', 11, 3 );
add_filter( 'day_link', 'changePermalinks', 11, 3 );
add_filter( 'month_link', 'changePermalinks', 11, 3 );
add_filter( 'year_link', 'changePermalinks', 11, 3 );

function changePermalinks( $url, $post, $leavename = false ) {
    $url = str_replace( "/nl-nl/en/", "/en-nl/", $url );
    return $url;
}
```

WPML

```php
add_filter( 'icl_ls_languages', 'wpml_url_fix' );

function wpml_url_fix( $languages ) {
    global $wpml_url_converter;

    $abs_home = $wpml_url_converter->get_abs_home();

    foreach ( $languages as $lang => $element ) {
        $languages[$lang]['url'] = str_replace( "/nl-nl/en/", "/en-nl/", $languages[$lang]['url'] );
    }

    return $languages;
}
```

Rankmath

```php
add_filter( 'rank_math/frontend/canonical', function( $canonical ) {
    return str_replace( "/nl-nl/en/", "/en-nl/", $canonical );
});
```

This will not cover every angle, but will give you a starting point! I love my puzzles and there always is a viable solution :)

Need something similar … get in touch!

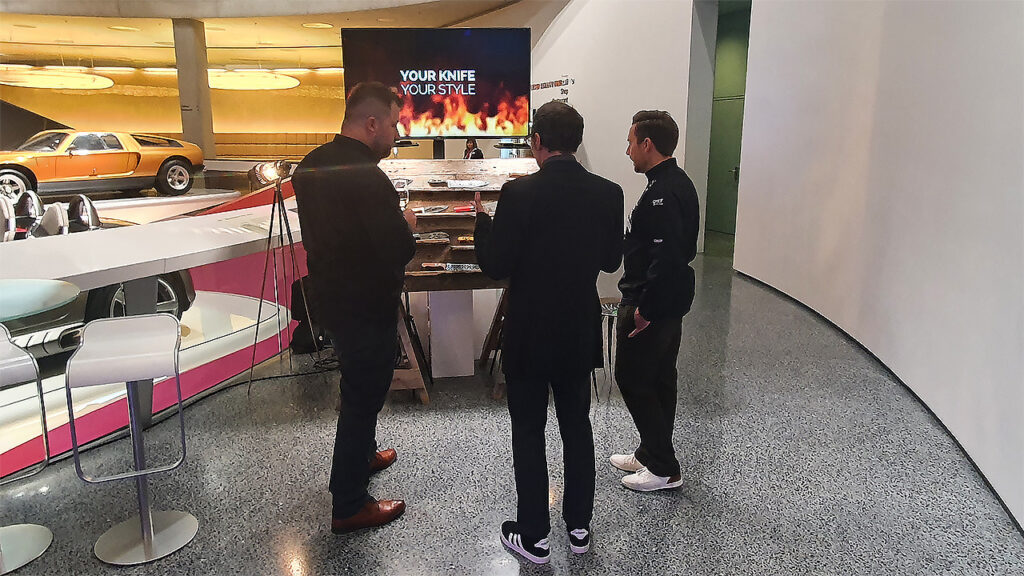

This year I had the opportunity, through my customer TYPEMYKNIFE®, to attend the “Nacht der Sterne” at the Mercedes-Benz Museum in Stuttgart. The gala brings together more than 800 guests from gastronomy, the hotel industry, politics, culture and business.

It was a great evening, at which the top chefs from Germany, Switzerland, South Tyrol and Austria were not only honored, but could also show live what they are capable of.

The party is organized by the Allgemeine Hotel- und Gastronomie-Zeitung (ahgz) and Burg Staufeneck / Rolf Straubinger.

The evening was hosted by the journalist and TV chef Felicitas Then and Rolf Westermann from the ahgz editorial board.

Information about the award, the methods and the winners can be found here.

TYPEMYKNIFE® presented a small selection of its kitchen knives at a booth on site, all prepared and engraved via the 3D engraving configurator. This gave guests the opportunity to discover the engraved kitchen knives in person and to admire the quality.

The almost 1400 km round trip from the north was worth it. It is always nice to meet customers not just virtually, especially when the distance is this big. At that distance you don't just meet up for a quick coffee or gin and tonic :)

Greetings to TYPEMYKNIFE® / Schwäbisch Gmünd / Stuttgart

This is not a tutorial, but more like sharing a nice geeky road-trip ;)

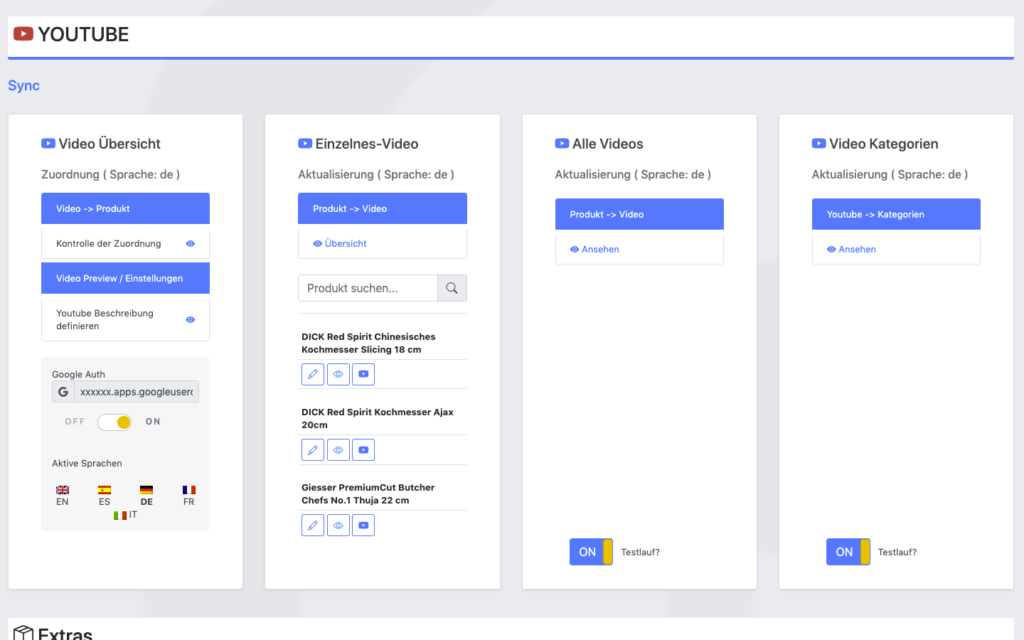

I have a pretty good understanding of the YouTube Data API, as I actively used it on portalZINE TV in the past to upload videos and dynamically link them to my local post types.

For one of my latest customer projects (TYPEMYKNIFE / typemyknife.com), the task was a bit more complicated and the goal was to make it as future-proof as it can be with the Google APIs :)

Prerequisites / References to get you started:

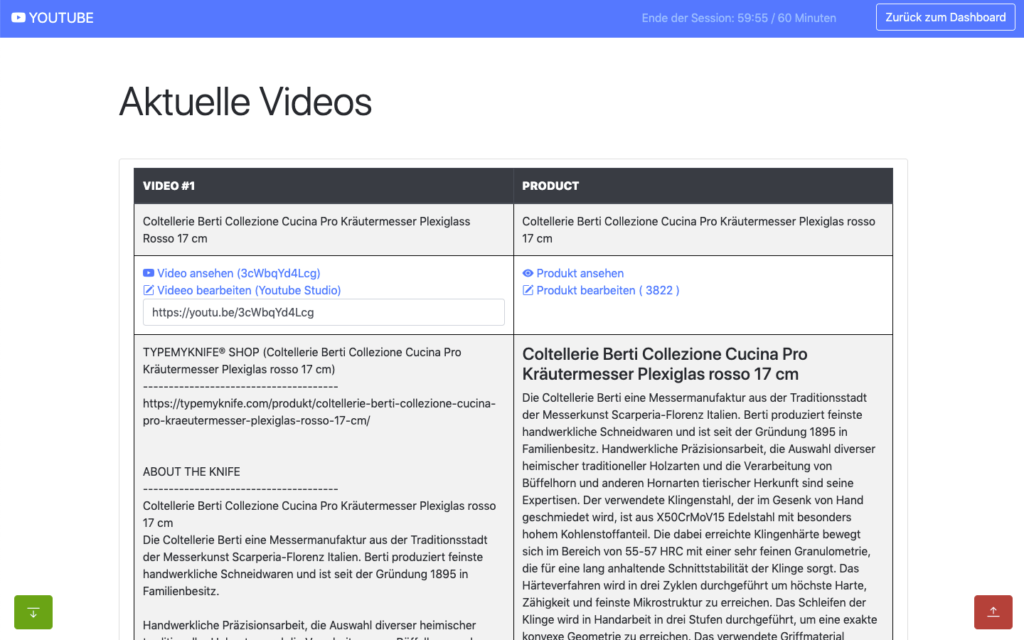

The goal for the setup was to actively synchronize WooCommerce products with linked / attached videos that have their source at YouTube.

As the website is multilingual, WPML integration is critical as well. And as YouTube allows localization of title and description, that can be added into the mix quite easily in the future ;)

The following product attributes should be mirrored and optimised for Youtube:

The following attributes should be integrated into the description to enrich the Youtube description:

All of these attributes will be collected internally and assigned using a simple template system, which allows the customer to move parts around and lay out the YouTube description freely.
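To illustrate the idea of such a template system (not the plugin's actual code, which is based on Twig / Timber), here is a minimal placeholder renderer. The function name `renderTemplate`, the `{{ name }}` placeholder syntax and the attribute names in the usage example are all assumptions made for this sketch.

```javascript
// Replace every {{ key }} placeholder with the matching value.
// Unknown placeholders are rendered as an empty string.
function renderTemplate(template, values) {
  return template.replace(/\{\{\s*(\w+)\s*\}\}/g, (match, key) =>
    Object.prototype.hasOwnProperty.call(values, key) ? String(values[key]) : ''
  );
}

// Hypothetical usage: the customer can reorder the lines of the template freely.
const description = renderTemplate(
  '{{ title }}\n\n{{ shortDescription }}\n\nShop: {{ productUrl }}',
  {
    title: 'Example knife',
    shortDescription: 'Engraved kitchen knife.',
    productUrl: 'https://example.com/product',
  }
);
```

Because the layout lives in the template string, moving a block around means editing text, not code.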

The following stats will be collected for review:

Youtube SEO

These are the relevant key aspects, that help to get your videos more views.

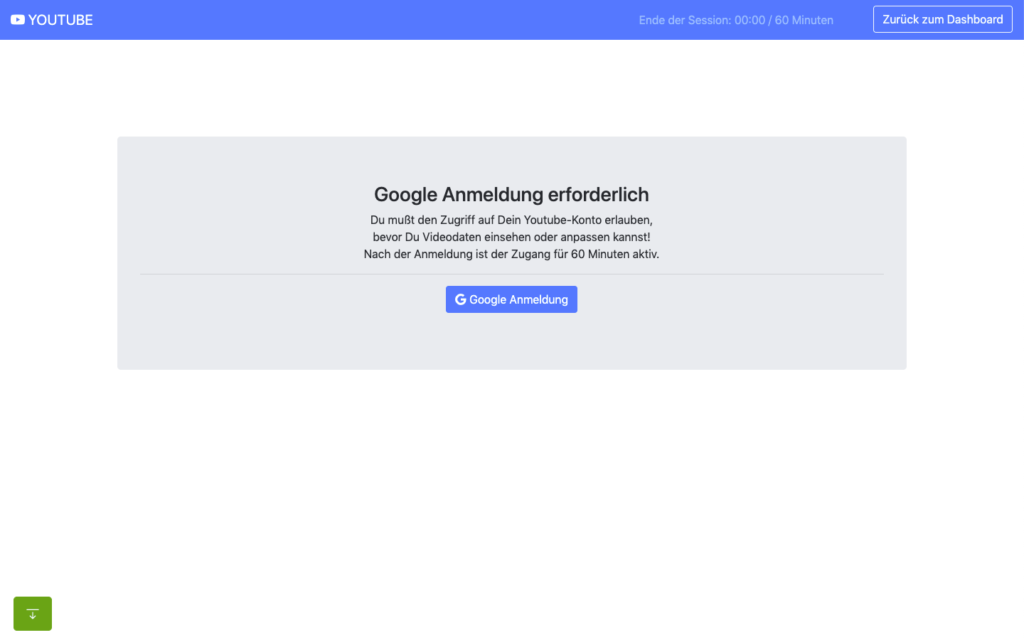

In the past, access to the YouTube Data API was far easier and less limited when it came to offline / non-expiring OAuth2 refresh tokens.

When you are building a server-side application that is only available to your customer or moderators, it makes no sense to run that app through the Google App verification. Your app will never be used in public.

The YouTube Data API and its scopes are classified as sensitive and therefore require a third-party security assessment for public access.

The scopes I am requesting are https://www.googleapis.com/auth/youtube.upload + https://www.googleapis.com/auth/youtube.

Because of that, it's far easier to just set up OAuth 2 in test mode and restrict access to your customer and specific additional accounts only (up to 100 test users are allowed). What all these accounts need is access to your own or the brand YouTube channel.

Preparation in the Google Cloud Console:

A detailed description can be found here.

You can circumvent verification for the consent screen by using an organisation setup at Google. Here is some info about that. With that setup, offline refresh tokens should work fine.

Update: I just tried that, but it won't work with a branded YouTube account, even though the cloud user has admin access to it. I am not giving up yet, but Google / YouTube really make it difficult to have a simple offline solution for specific tasks ;) BTW, I also forced the login hint to make sure the right account is logged in: $client->setLoginHint('YourWorkspaceAccount');

You might have heard of “The League of Extraordinary Packages”. It is a group of developers who have banded together to build solid, well-tested PHP packages using modern coding standards.

They also offer an OAuth2-client + OAuth2 Google extension that can be used.

On the server, the Google API PHP SDK can be easily integrated using Composer.

In my customer plugin I neatly separated all relevant areas in classes & traits:

You can check the expiry time of your access token by accessing:

https://www.googleapis.com/oauth2/v1/tokeninfo?access_token=YOUR_TOKEN

“A Google Cloud Platform project with an OAuth consent screen configured for an external user type and a publishing status of “Testing” is issued a refresh token expiring in 7 days.” – Google
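The expiry check against the tokeninfo URL can also be scripted. The following is a sketch under assumptions: `secondsUntilExpiry` is a made-up name, the response is assumed to be JSON carrying an `expires_in` field (remaining lifetime in seconds), and an expired or invalid token is assumed to produce a non-2xx status. Verify both against a real response before relying on it.

```javascript
// Ask the tokeninfo endpoint how long an access token remains valid.
// Returns 0 when the endpoint reports an error or no expiry is present.
async function secondsUntilExpiry(accessToken, fetchImpl = fetch) {
  const url =
    'https://www.googleapis.com/oauth2/v1/tokeninfo?access_token=' +
    encodeURIComponent(accessToken);
  const response = await fetchImpl(url);
  if (!response.ok) return 0; // assumed: invalid or expired token
  const info = await response.json();
  return Number(info.expires_in) || 0; // assumed field name
}

// True when the token should be refreshed before starting a longer job.
async function needsRefresh(accessToken, minSeconds = 300, fetchImpl = fetch) {
  return (await secondsUntilExpiry(accessToken, fetchImpl)) < minSeconds;
}
```

A bulk job could call `needsRefresh` before each batch and use the stored refresh token to get a new access token when it returns `true`.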

Basic Auth example from the SDK:

```php
<?php

// Call set_include_path() as needed to point to your client library.
set_include_path($_SERVER['DOCUMENT_ROOT'] . '/directory/to/google/api/');
require_once 'Google/Client.php';
require_once 'Google/Service/YouTube.php';
session_start();

/*
 * You can acquire an OAuth 2.0 client ID and client secret from the
 * Google Cloud Console <https://cloud.google.com/console>
 * For more information about using OAuth 2.0 to access Google APIs, please see:
 * <https://developers.google.com/youtube/v3/guides/authentication>
 * Please ensure that you have enabled the YouTube Data API for your project.
 */
$OAUTH2_CLIENT_ID = 'XXXXXXX.apps.googleusercontent.com';
$OAUTH2_CLIENT_SECRET = 'XXXXXXXXXX';
$REDIRECT = 'http://localhost/oauth2callback.php';
$APPNAME = "XXXXXXXXX";

$client = new Google_Client();
$client->setClientId($OAUTH2_CLIENT_ID);
$client->setClientSecret($OAUTH2_CLIENT_SECRET);
$client->setScopes('https://www.googleapis.com/auth/youtube');
$client->setRedirectUri($REDIRECT);
$client->setApplicationName($APPNAME);
$client->setAccessType('offline');

// Define an object that will be used to make all API requests.
$youtube = new Google_Service_YouTube($client);

if (isset($_GET['code'])) {
    if (strval($_SESSION['state']) !== strval($_GET['state'])) {
        die('The session state did not match.');
    }

    $client->authenticate($_GET['code']);
    $_SESSION['token'] = $client->getAccessToken();
}

if (isset($_SESSION['token'])) {
    $client->setAccessToken($_SESSION['token']);
    echo '<code>' . $_SESSION['token'] . '</code>';
}

// Check to ensure that the access token was successfully acquired.
if ($client->getAccessToken()) {
    try {
        // Call the channels.list method to retrieve information about the
        // currently authenticated user's channel.
        $channelsResponse = $youtube->channels->listChannels('contentDetails', array(
            'mine' => 'true',
        ));

        $htmlBody = '';
        foreach ($channelsResponse['items'] as $channel) {
            // Extract the unique playlist ID that identifies the list of videos
            // uploaded to the channel, and then call the playlistItems.list method
            // to retrieve that list.
            $uploadsListId = $channel['contentDetails']['relatedPlaylists']['uploads'];

            $playlistItemsResponse = $youtube->playlistItems->listPlaylistItems('snippet', array(
                'playlistId' => $uploadsListId,
                'maxResults' => 50
            ));

            $htmlBody .= "<h3>Videos in list $uploadsListId</h3><ul>";
            foreach ($playlistItemsResponse['items'] as $playlistItem) {
                $htmlBody .= sprintf('<li>%s (%s)</li>', $playlistItem['snippet']['title'],
                    $playlistItem['snippet']['resourceId']['videoId']);
            }
            $htmlBody .= '</ul>';
        }
    } catch (Google_Service_Exception $e) {
        // Same exception class as in the update example further down.
        $htmlBody .= sprintf('<p>A service error occurred: <code>%s</code></p>',
            htmlspecialchars($e->getMessage()));
    } catch (Google_Exception $e) {
        $htmlBody .= sprintf('<p>A client error occurred: <code>%s</code></p>',
            htmlspecialchars($e->getMessage()));
    }

    $_SESSION['token'] = $client->getAccessToken();
} else {
    $state = mt_rand();
    $client->setState($state);
    $_SESSION['state'] = $state;

    $authUrl = $client->createAuthUrl();
    $htmlBody = <<<END
<h3>Authorization Required</h3>
<p>You need to <a href="$authUrl">authorise access</a> before proceeding.</p>
END;
}
?>

<!doctype html>
<html>
  <head>
    <title>My Uploads</title>
  </head>
  <body>
    <?php echo $htmlBody?>
  </body>
</html>
```

A simple upload example can be found here.

```php
try {
    // REPLACE this value with the video ID of the video being updated.
    $videoId = "VIDEO_ID";

    // Call the API's videos.list method to retrieve the video resource.
    $listResponse = $youtube->videos->listVideos("snippet",
        array('id' => $videoId));

    // If $listResponse is empty, the specified video was not found.
    if (empty($listResponse)) {
        $htmlBody .= sprintf('<h3>Can\'t find a video with video id: %s</h3>', $videoId);
    } else {
        // Since the request specified a video ID, the response only
        // contains one video resource.
        $video = $listResponse[0];
        $videoSnippet = $video['snippet'];
        $tags = $videoSnippet['tags'];

        // Preserve any tags already associated with the video. If the video does
        // not have any tags, create a new list. Replace the values "tag1" and
        // "tag2" with the new tags you want to associate with the video.
        if (is_null($tags)) {
            $tags = array("tag1", "tag2");
        } else {
            array_push($tags, "tag1", "tag2");
        }

        // Set the tags array for the video snippet
        $videoSnippet['tags'] = $tags;

        // Update the video resource by calling the videos.update() method.
        $updateResponse = $youtube->videos->update("snippet", $video);

        $responseTags = $updateResponse['snippet']['tags'];

        $htmlBody .= "<h3>Video Updated</h3><ul>";
        $htmlBody .= sprintf('<li>Tags "%s" and "%s" added for video %s (%s) </li>',
            array_pop($responseTags), array_pop($responseTags),
            $videoId, $video['snippet']['title']);
        $htmlBody .= '</ul>';
    }
} catch (Google_Service_Exception $e) {
    $htmlBody .= sprintf('<p>A service error occurred: <code>%s</code></p>',
        htmlspecialchars($e->getMessage()));
} catch (Google_Exception $e) {
    $htmlBody .= sprintf('<p>An client error occurred: <code>%s</code></p>',
        htmlspecialchars($e->getMessage()));
}
```

All operations to and from the YouTube Data API are rate limited. What matters for us is the daily quota.

The default quota is 10,000 units per day. That sounds like a lot, but it is easily gone after updating 150-200 videos. You can request to have this limit raised, but that again means a lot of paperwork and questions that are just not needed.

This limit simply means that you need to cache as many queries as possible and only query live when needed ;)
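A cache in front of the API calls can be very small. The sketch below is not the plugin's implementation, just the general pattern: `createCache` is a made-up name, the cache lives in memory only, and the time-to-live is an assumption you would tune to how often the data really changes.

```javascript
// Returns a `cached(key, load)` function. `load` is only called when the key
// is missing or its entry is older than `ttlMs` milliseconds.
function createCache(ttlMs, now = () => Date.now()) {
  const entries = new Map();
  return async function cached(key, load) {
    const hit = entries.get(key);
    if (hit && hit.expires > now()) {
      return hit.value; // served locally, no quota spent
    }
    const value = await load(); // the only place that queries live
    entries.set(key, { value, expires: now() + ttlMs });
    return value;
  };
}
```

Usage could look like `const video = await cached('video:' + id, () => fetchVideoFromApi(id));`, where `fetchVideoFromApi` stands in for whatever function performs the live request.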

Something you learn fast, when experimenting with different things! I hit that limit multiple times in the first few days, with around 500 videos in the queue.

Different operations cost different amounts of quota units.
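A quick calculation shows why a queue of around 500 videos does not fit into one day. The unit costs below (1 unit for a list call, 50 units for an update call) are assumptions for this sketch; check the current quota cost table before planning with them. `planBatches` is a made-up helper name.

```javascript
const DAILY_QUOTA = 10000; // default quota in units per day
const COST = { list: 1, update: 50 }; // assumed unit costs per call

// How many videos fit into one day, and how many days a queue needs,
// when each video costs one list call plus one update call.
function planBatches(videoCount, unitsPerVideo = COST.list + COST.update, dailyQuota = DAILY_QUOTA) {
  const perDay = Math.floor(dailyQuota / unitsPerVideo);
  if (perDay < 1) {
    throw new Error('A single video costs more than the daily quota');
  }
  return { perDay, days: Math.ceil(videoCount / perDay) };
}

const plan = planBatches(500);
// plan.perDay is 196 and plan.days is 3 under the assumed costs
```

With these numbers, 196 videos per day lands inside the 150-200 range mentioned above, and a queue of 500 videos needs three days.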

It also helps to use the Google Developers OAuth 2.0 Playground to test-drive the YouTube Data API with your own credentials while optimising your own code.

You can define your own OAuth 2.0 configuration by clicking the cog in the upper right corner.

I set up the bulk updating so that it can be split over multiple days, if required. For this an offline refresh token is needed, as the standard access token expires after 60 minutes.

My customer can also just update a single video, when changes are applied to the product or a new product has been added.

If more frequent updates are required, I will ask for the daily quota to be raised. You can circumvent the limit by using multiple Google Cloud Platform accounts with new OAuth credentials, but that is really overkill right now. I have done that in the past ;)

The GUI is simply based on Bootstrap, to keep it simple and clean. I am using my own wrapper to make it work within the WordPress admin.

For all ajax operations, I am using htmx and _hyperscript, which I will talk about in another article in the future.

Really neat and clean way to build single page interfaces.

The whole plugin runs off its own REST API endpoint. I just love using WordPress as a headless system.

I used Twig / Timber for the templates, to separate logic and layout. Timber has been my go-to solution for years now. It drives my own and many customer websites.

This has been a lot of fun, maybe a bit too much LOL

I do geek-out about many of my projects, but this experience helped me to bring my WordPress toolbox to the next level. This will help to drive other things in the future.

Working so deeply with the Youtube Data API has been fun and feels so easy now, after all remaining problems have been solved.

Would have loved this during my portalZINE TV days ;)

If you read all this, you just earned yourself a badge for completion ;)

Need something similar or something else? Just say hi and we can talk.

The first contact is always critical in order to define a possible project or future collaboration. I always have some time during the week to talk to potential customers about possible solutions.

In a casual first conversation, you can clarify what both sides need.

Either the chemistry is right or not :)

It doesn’t have to be at all!

There are approaches and solutions for every project size. Most projects can be split into phases to keep everything manageable and affordable.

My projects are always tailored personally and predefined as precisely as possible.

If you don’t ask, you end up paying more everywhere!

I have been looking after private individuals, startups, medium-sized companies and larger companies for years.

As a full stack developer, I can provide my customers with a complete project overview and help classify the effort. I also have design and advertising experience, as well as an above-average basic commercial and legal knowledge.

My customers know that I have no problem sharing my knowledge and can provide assistance in all areas.

Again, just talk to me and let's find common ground :)

Until about 5 years ago, my main focus was on international projects. But in the last few years I have also built a solid foothold in the German-speaking arena.

I have implemented projects with up to 15 languages. For me, English sometimes flows better than German, which is also reflected in my portfolio and my bio. I can write, read and speak French well (even if it's a bit rusty!).

If you want to set up a multilingual site or translate parts of it, you've come to the right place. Of course, this also applies to WordPress via WPML.

Again, just say hello or Moin!

For me everything is actionable and can be worked out together depending on the project. Nobody needs to pay more than is really absolutely necessary. Sustainability is also a big topic and flows into all my projects.

Over the years I have also developed many of my own internal solutions, in order to be able to implement special solutions quickly.

Every project puzzle can be solved!

It does not work without those and cannot be disregarded in any development phase of a project. I have a lot of experience in that area and can also contribute ideas and solutions where needed.

There are certainly still a few things that could be listed here, but I would rather clarify this in a personal conversation.

Gladly by phone or live via Skype!

Just ask for an appointment!

I look forward to hearing from you. Thanks for your interest!

Greetings

VR is a new passion of mine that I play with in my free time, but also explore as a developer and tech enthusiast.

As video quality has evolved a lot in the past 2 years, the big topic now is full body immersion.

The following things are becoming more important:

I will use this article to collect things that are already available, DIY projects, experiments and things that are in their early stages.

Hand & finger tracking is already making its way into consumer products. It is still not widely integrated, but has made big jumps the past year.

Eye tracking is not only important to make avatars more life-like, but also to track your eye focus and help to reduce processor load.

Lip tracking has made a big jump, with the new Vive Lip tracker and is important for social interactions.

Body tracking is one of the areas, that has so many projects attached to it. There are so many neat solutions out there, that almost anyone can use it by now.

Free locomotion is one of the biggest challenges in VR right now. You can increase your play area, but that is still limiting and requires space. There are VR treadmills, but none of them really reproduces life-like natural movement yet. And those solutions that come close are still out of reach for most people. Most of the tracking above is somehow covered and will be available soon, while real locomotion is really the hardest to solve of them all.

Always crucial to have the right look for yourself in VR :)

I am always looking for easy ways to white label the WordPress administration for myself and my clients. A nice personal touch for each project and an easy way to declutter the interface.

These are my personal favorites, that I use on a regular basis.

There are a lot of solutions out there, but many break easily and are really heavy to load. Some of these solutions I tried also break easily on new WordPress Upgrades. The first two below are currently my favorites.

When sharing the administration with your customer, you often need to make it as simple as possible for them. Depending on your setup, the menu becomes cluttered and overwhelming really fast.

I often trim menus for each user role, to make only those options accessible that are really needed.

When sharing the administration with multiple users, it's always nice to add some personality to the user profiles as well.

WP User Profiles

“WP User Profiles is a sophisticated way to edit users in WordPress.”

The plugin provides other small addons, like WP User Avatars. Neat plugin to tweak admins, editors and other users.

Enjoy

Alex

Everybody seems to be searching for ways to integrate digital communication into their home-office environments or client/customer workflows. But many are not willing to pay huge monthly fees or rely on services like Skype, Zoom, Microsoft Teams or Slack.

For smaller team meetings, or webinars for up to 6 people, there is an alternative! You can easily use Nextcloud and its integrated Talk application for chat and WebRTC video/audio streaming. Combined with the calendar app, this becomes a very effective and low-cost solution.

Nextcloud Talk is a fully self hosted audio/video and chat communication service. It features web and mobile apps and is designed to offer the highest degree of security while being easy to use.

Talk makes it easy to call customers and partners in one-to-one or group scenarios. Users can invite external chat participants into public rooms with a URL. The chat enables participants to easily exchange messages, links and notes.

Share the content of a single window or a full desktop screen for presentations with chat-partners. Manage participants by inviting, muting or removing them. Schedule meetings and be notified when they start. A lobby is provided for guests to wait until the call starts.

Calls are end-to-end encrypted so no communication can be intercepted. Chat logs are stored securely on your own server.

The peer to peer nature of Talk does inflate network traffic, creating one incoming and sending stream per other participant. This places practical limitations on calls that depend on network capabilities.
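To make the growth concrete: in a full mesh, every participant sends one stream to and receives one stream from each other participant. The sketch below just does that arithmetic. `meshStreams` is a made-up name, and the 1 Mbit/s per video stream is purely an assumed figure for illustration, not a Nextcloud Talk specification.

```javascript
// Stream counts and rough bandwidth for a full-mesh (peer-to-peer) call.
function meshStreams(participants, mbitPerStream = 1) {
  const others = Math.max(participants - 1, 0);
  return {
    sentPerClient: others, // one outgoing stream per other participant
    receivedPerClient: others, // one incoming stream per other participant
    totalStreams: participants * others, // one-way streams in the whole call
    uploadMbitPerClient: others * mbitPerStream, // assumed bitrate, see above
  };
}

const callOfSix = meshStreams(6);
// callOfSix.sentPerClient is 5 and callOfSix.totalStreams is 30
```

The total grows roughly with the square of the group size, which is why the weakest upload connection in the call tends to set the practical limit.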

A typical private Nextcloud Talk setup should handle dozens of calls with each up to 4-6 participants, more if all participants have a good network connection.

Nextcloud is part of many hosting offers and can often be installed with a single click. You will also find hosting providers that specialise in Nextcloud hosting and offer you a preinstalled setup to use.

But any decent hosting setup should be enough to roll out your own. Setting up Nextcloud is really easy, as it offers a simple guided installation process.

If you need help setting up your own private instance, get in touch and we can work something out. Depending on your server setup and apps you want, it should not take longer than 60-90 minutes to get it up and running.

Alex, Contact Me

Nextcloud Talk tries to establish a direct peer-to-peer (P2P) connection. On connections beyond the local network (behind a NAT or router), clients therefore need to know not only each other's public IPs, but the participants' local IPs as well. Processing this is the job of a STUN server. As one is preconfigured for Nextcloud Talk, nothing else needs to be done so far.

But in many cases, e.g. in combination with firewalls or symmetric NAT, a STUN server will not work as well, and then a so called TURN server is required. Now no direct P2P connection is established, but all traffic is relayed through the TURN server, thus additional (at least internal) traffic and resources are used.

Nextcloud Talk will try direct P2P in the first place, use STUN if needed and TURN as a last-resort fallback. So, to be most flexible and guarantee that your Nextcloud Talk instance works in all possible connection cases, you most probably want to set up a TURN server. COTurn is an open source solution that offers just that.

If you need to set up your own TURN server, I can help. There are pretty cost-effective ways to host your own.
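In Nextcloud Talk the STUN and TURN servers are entered in the admin settings, not in code. On the WebRTC level, though, they end up as a list of ICE servers handed to the browser, which is what the sketch below builds. `buildIceServers` is a made-up helper, and the host names and credentials in the usage note are placeholders.

```javascript
// Build the `iceServers` list for a WebRTC connection.
// STUN entries need only a URL; TURN entries also carry credentials.
function buildIceServers({ stunUrl, turnUrl, turnUsername, turnCredential }) {
  const servers = [];
  if (stunUrl) {
    servers.push({ urls: stunUrl });
  }
  if (turnUrl) {
    servers.push({
      urls: turnUrl,
      username: turnUsername,
      credential: turnCredential,
    });
  }
  return servers;
}
```

In a browser, the result would be passed along like `new RTCPeerConnection({ iceServers: buildIceServers({ stunUrl: 'stun:stun.example.org:3478', turnUrl: 'turn:turn.example.org:3478', turnUsername: 'user', turnCredential: 'secret' }) })`. The browser then tries the direct and STUN-derived candidates first and only relays through TURN when nothing else connects.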

Alex, Contact Me