I had the chance this year to meet up with my client Thomas Dowson from “Archaeology Travel Media” at the Travel Innovation Summit in Seville.

Over the past 2 years we have been revamping all the content from archaeology-travel.com and integrating a sophisticated travel itinerary builder into the mix. We are almost feature complete and are currently fine-tuning the system. New explorers are welcome to sign up and test-drive our set of unique features.

It was so nice to finally meet the whole team in person and celebrate what we have accomplished together so far.

Directly taken from the front-page :)

“EXPLORE THE WORLD’S PASTS WITH ARCHAEOLOGY TRAVEL GUIDES, CRAFTED BY EXPERIENCED ARCHAEOLOGISTS & HISTORIANS

Whatever your preferred style of travel, budget or luxury, backpacker or hand luggage only, slow or adventure, if you are interested in archaeology, history and art this is an online travel guide just for you.

Here you will find ideas for where to go, what sites, monuments, museums and art galleries to see, as well as information and tips on how to get there and what tickets to buy.

Our destination and thematic guides are designed to assist you to find and/or create adventures in archaeology and history that suit you, be it a bucket list trip or visiting a hidden gem nearby.”

More Details

About

Mission & Vision

Code of Ethics

We are constantly expanding our set of curated destinations, locations and POIs. Our plan is to make it even easier to find unique places for your next travel experience.

We are also working on partnerships to enhance travel options and offer an even broader variety of additional content.

Looking forward to all the things to come, as well as to the continued exceptional collaboration between all team members.

First a bit of context :)

Translation within WordPress is based on Gettext. Gettext is a software internationalization and localization (i18n) framework used in many programming languages to facilitate the translation of software applications into different languages. It provides a set of tools and libraries for managing multilingual strings and translating them into various languages.

The primary goal of Gettext is to separate the text displayed in an application from the code that generates it. It allows developers to mark strings in their code that need to be translated and provides mechanisms for extracting those strings into a separate file known as a “message catalog” or “translation template.”

The translation template file contains the original strings and serves as a basis for translators to provide translations for different languages. Translators use specialized tools, such as poedit or Lokalize, to work with the message catalog files. These tools help them associate translated strings with their corresponding original strings, making the translation process more manageable.

At runtime, Gettext libraries are used to retrieve the appropriate translated strings based on the user’s language preferences and the available translations in the message catalog. It allows applications to display the user interface, messages, and other text elements in the language preferred by the user.

Gettext is widely used in various programming languages, including C, C++, Python, Ruby, PHP, and many others. It has become a de facto standard for internationalization and localization in the software development community due to its flexibility and extensive support in different programming environments.
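The runtime lookup described above can be sketched in a few lines of JavaScript. This is only an illustration of the idea, not how Gettext or WordPress implement it: the `catalogs` object, its sample translations and the `translate` function are made up for this example.

```javascript
// Hypothetical in-memory message catalogs keyed by locale.
// In a real Gettext setup these would come from compiled catalog files.
const catalogs = {
  de_DE: { 'Add to cart': 'In den Warenkorb', 'Sale!': 'Angebot!' },
  fr_FR: { 'Add to cart': 'Ajouter au panier' },
};

// Look up a translation and fall back to the original string when the
// locale or the message is missing, so untranslated text still shows up.
function translate(msgid, locale) {
  const catalog = catalogs[locale];
  if (catalog && Object.prototype.hasOwnProperty.call(catalog, msgid)) {
    return catalog[msgid];
  }
  return msgid;
}
```

The fallback is the important part: a missing translation never breaks the page, it just shows the original string.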

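WordPress uses dedicated language files to store translations of your strings of text in other languages. Two formats are used: .po (Portable Object, the human-readable text file that translators edit) and .mo (Machine Object, the compiled binary file that WordPress loads at runtime).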

There has been a discussion for years about whether it makes sense to cache .mo files to speed up WordPress when multiple languages are in use (see the discussion of the WordPress Core team).

There have been a couple of plugins trying to fix this and prevent reloading of language files on every pageload.

Most of these use transients in your database and object caches if active.

Well, it all depends on the number of language files and your infrastructure. This load/parse operation is quite CPU-intensive and takes a significant amount of time.

I decided not to bother with it in the past :) But I am always looking for ways to speed up multilingual websites, as that is my daily bread & butter ;) Check out Index WP MySQL For Speed & Index WP Users For Speed, which optimize the MySQL indexes for optimal speed. That change really does make a big difference!

One thing that can help to speed things up is to use the native gettext extension instead of the WordPress implementation of it. This speeds up translation processing and helps big multilingual websites.

Native Gettext for WordPress by Colin Leroy-Mira provides just that.

Create a must-use plugin and add this:

    <?php
    /** Plugin Name: Disable Gettext */
    add_filter('override_load_textdomain','__return_true');

This will prevent any .mo file from loading.

Please consider the performance impact. Read about it at the main documentation.

    <?php
    /**
     * Change text strings
     *
     * @link http://codex.wordpress.org/Plugin_API/Filter_Reference/gettext
     */
    function my_text_strings( $translated_text, $text, $domain ) {
        switch ( $translated_text ) {
            case 'Sale!' :
                $translated_text = __( 'Clearance!', 'woocommerce' );
                break;
            case 'Add to cart' :
                $translated_text = __( 'Add to basket', 'woocommerce' );
                break;
            case 'Related Products' :
                $translated_text = __( 'Check out these related products', 'woocommerce' );
                break;
        }
        return $translated_text;
    }
    add_filter( 'gettext', 'my_text_strings', 20, 3 );

Enjoy ….

Extending iPanorama 360 can be a challenge, as none of the events are documented. You can talk to the developer or ask for support to get things done.

You can also read up on the core assets used:

ipanorama.min.js

jquery.ipanorama.min.js.

You can easily search for the events and figure out the parameters passed. Here is a quick reference for the things I have used so far.

    ipanorama:ready
    ipanorama:config
    ipanorama:fullscreen
    ipanorama:mode
    ipanorama:transform-mode-changed

    ipanorama:tooltip-show
    ipanorama:tooltip-hide

    ipanorama:popover-show
    ipanorama:popover-hide

    ipanorama:scene-before-load
    ipanorama:scene-after-load
    ipanorama:scene-progress
    ipanorama:scene-error
    ipanorama:scene-show-start
    ipanorama:scene-show-complete
    ipanorama:scene-hide-start
    ipanorama:scene-hide-complete
    ipanorama:scene-clear
    ipanorama:scene-index
    ipanorama:scene-point-add
    ipanorama:scene-point-remove
    ipanorama:scene-point-dragstart
    ipanorama:scene-point-dragend
    ipanorama:scene-point-select
    ipanorama:scene-point-deselect
    ipanorama:scene-point-hide
    ipanorama:scene-point-show
    ipanorama:scene-point-change
    ipanorama:scene-plane-add
    ipanorama:scene-plane-remove
    ipanorama:scene-plane-dragstart
    ipanorama:scene-plane-dragend
    ipanorama:scene-plane-select
    ipanorama:scene-plane-deselect
    ipanorama:scene-camera-start
    ipanorama:scene-camera-end
    ipanorama:scene-camera-change
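Since the events are undocumented, a quick way to figure out the parameters is to log them. Here is a small sketch for that. The helper `logPanoramaEvents` and the `SCENE_EVENTS` selection are my own names for this example; the only thing assumed about iPanorama is that the container offers the jQuery-style `.on(eventName, handler)` used in the snippets in this post.

```javascript
// A few event names taken from the reference lists above.
const SCENE_EVENTS = [
  'ipanorama:scene-before-load',
  'ipanorama:scene-after-load',
  'ipanorama:scene-error',
];

// Attach one logging handler per event name.
// `$container` is assumed to be the jQuery-wrapped container (instance.$container);
// `log` is injectable so the helper can be tried out without a browser.
function logPanoramaEvents($container, eventNames, log = console.log) {
  eventNames.forEach((name) => {
    $container.on(name, (e, data) => log(name, data));
  });
  return eventNames.length;
}
```

Inside the custom JS of a panorama this would be called as `logPanoramaEvents(this.$container, SCENE_EVENTS);` and the payload of each event then shows up in the browser console.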

    var instance = this,
        $ = jQuery;

    instance.$container.on("ipanorama:scene-after-load", function(e, data) {
        data.scene.control.minPolarAngle = 90 * Math.PI / 180;
        data.scene.control.maxPolarAngle = 90 * Math.PI / 180;
    });
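The snippet above sets both angles to 90 degrees, which locks the vertical tilt to the horizon. If you want to allow a range instead, a tiny helper keeps the degree-to-radian conversion in one place. This is just a sketch: `degToRad` and `limitPolarAngle` are hypothetical names, and the only assumption is a control object with the `minPolarAngle` / `maxPolarAngle` properties used above.

```javascript
// Convert degrees to radians, matching the 90 * Math.PI / 180 used above.
function degToRad(degrees) {
  return (degrees * Math.PI) / 180;
}

// Restrict the vertical look range on a control object that exposes
// minPolarAngle / maxPolarAngle. Passing the same value twice locks the tilt.
function limitPolarAngle(control, minDegrees, maxDegrees) {
  if (minDegrees > maxDegrees) {
    throw new RangeError('minDegrees must not exceed maxDegrees');
  }
  control.minPolarAngle = degToRad(minDegrees);
  control.maxPolarAngle = degToRad(maxDegrees);
  return control;
}
```

In the event handler this would replace the two assignments, for example `limitPolarAngle(data.scene.control, 60, 120);`.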

    var instance = this,
        $ = jQuery;

    function onMarkerClick(e) {
        var userData = this.cfg.userData;
        if(userData) {
            var $iframe = $(userData);
            $.featherlight($iframe);
        }
    }

    for(var i=0;i<instance.markers.length;i++) {
        var marker = instance.markers[i];
        marker.$marker.on('click', $.proxy(onMarkerClick, marker));
    }

    const plugin = this;
    const $ = jQuery;

    const $loading = $('<div>').addClass('myloading').text('loading');
    plugin.$container.append($loading);

    plugin.$container.on('ipanorama:scene-progress', (e, data) => {
        const flag = data.progress.loaded == data.progress.total;
        $loading.toggleClass('active', !flag);
    });

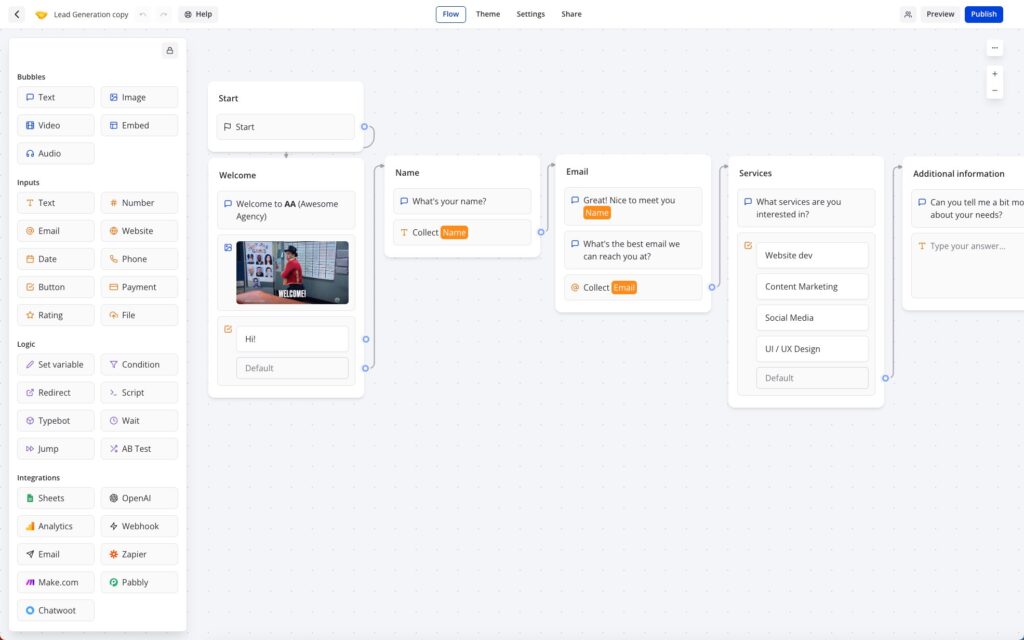

Typebot is a free and open-source platform that lets you create conversational apps and forms, which can be embedded anywhere on your website or mobile apps.

It provides various features like text, image, and video bubble messages, input fields for various data types, native integrations, conditional branching, URL redirections, beautiful animations, and customizable themes.

You can embed it as a container, popup, or chat bubble with the native JS library and get in-depth analytics of the results in real-time.

Typebot can be used in the Cloud (free tier available) or installed via Docker.

Its drag & drop builder makes it amazingly fast to build your personal chatbot. It provides a ton of integrations (Google Analytics, Google Sheets, OpenAI, Zapier, Webhooks, Email, Chatwoot) out of the box, or you can connect other things via your own API requests.

I have been a longtime user of RocketChat and built my own chatbots using Hubot or Botpress.

RocketChat

“Rocket.Chat is a free and open-source team chat platform that enables real-time communication between team members. It provides team messaging, audio and video conferencing, screen sharing, file sharing, and other collaboration features. It offers a familiar chat interface that makes it easy for teams to stay connected and collaborate effectively.

Rocket.Chat is highly customizable and extensible, with a large number of community-contributed plugins and integrations. It supports multiple platforms, including web, desktop, and mobile, making it accessible to teams regardless of their device or location. It also offers end-to-end encryption for secure communication, and compliance with various industry regulations such as HIPAA, GDPR, and others.”

Hubot

“Hubot is a popular open-source chatbot framework developed by GitHub. It allows developers to build and deploy custom chatbots that can automate tasks, integrate with various APIs, and enhance team communication and collaboration.

Hubot is built on Node.js and can be extended with various plugins and scripts, making it highly customizable and flexible. It comes with many built-in plugins, including integration with popular chat platforms like Slack, HipChat, and Campfire, as well as various APIs like Twitter, GitHub, and Google.

Hubot enables developers to build chatbots that can perform various tasks, such as scheduling meetings, deploying code, fetching data, and responding to user queries. It also provides a command-line interface for managing and interacting with the chatbot.”

Botpress

“Botpress is an open-source platform that allows you to build and deploy chatbots and conversational interfaces. It provides a user-friendly interface and a visual flow editor that allows you to create and manage your chatbot’s conversation flow. Botpress comes with many pre-built components and integrations, including natural language processing (NLP) engines, chat widgets, and webhooks, making it easy to build complex chatbots with advanced functionality.

Botpress offers features like machine learning-based NLP, conversational analytics, multi-language support, customizable themes, and a modular architecture that allows you to add custom functionalities with ease. It supports multiple channels, including web, Facebook Messenger, Slack, and WhatsApp, allowing you to reach your audience wherever they are.”

Typebot beats Botpress and could be the reason why I might be skipping RocketChat as well. Not sure I want to build an integration for RocketChat myself.

Here is a base Docker Compose YAML file to get you started. It will set up a Postgres instance, the Typebot builder and the Typebot viewer.

    version: '3.3'
    services:
      typebot-db:
        image: postgres:13
        restart: always
        volumes:
          - db_data:/var/lib/postgresql/data
        environment:
          - POSTGRES_DB=typebot
          - POSTGRES_PASSWORD=typebot
      typebot-builder:
        image: baptistearno/typebot-builder:latest
        restart: always
        depends_on:
          - typebot-db
        ports:
          - '8080:3000'
        extra_hosts:
          - 'host.docker.internal:host-gateway'
        # See https://docs.typebot.io/self-hosting/configuration for more configuration options
        environment:
          - DATABASE_URL=postgresql://postgres:typebot@typebot-db:5432/typebot
          - NEXTAUTH_URL=<your-builder-url>
          - NEXT_PUBLIC_VIEWER_URL=<your-viewer-url>
          - ENCRYPTION_SECRET=<your-encryption-secret>
          - ADMIN_EMAIL=<your-admin-email>
      typebot-viewer:
        image: baptistearno/typebot-viewer:latest
        restart: always
        ports:
          - '8081:3000'
        # See https://docs.typebot.io/self-hosting/configuration for more configuration options
        environment:
          - DATABASE_URL=postgresql://postgres:typebot@typebot-db:5432/typebot
          - NEXT_PUBLIC_VIEWER_URL=<your-viewer-url>
          - ENCRYPTION_SECRET=<your-encryption-secret>
    volumes:
      db_data:

I am a huge Docker fan and run my own home and cloud server with it.

“Docker is a platform that allows developers to create, deploy, and run applications in containers. Containers are lightweight, portable, and self-sufficient environments that can run an application and all its dependencies, making it easier to manage and deploy applications across different environments. Docker provides tools and services for building, shipping, and running containers, as well as a registry for storing and sharing container images.

With Docker, developers can package their applications as containers and deploy them anywhere, whether it’s on a laptop, a server, or in the cloud. Docker has become a popular technology for DevOps teams and has revolutionized the way applications are developed and deployed.”

I am always looking for new ways to document the tools I use. This might help others to find interesting projects to enhance their own work or hobby life :)

I will have multiple series of this kind. I am starting with Docker this week, as it is at the core of many things I do. I often test-drive things locally before deploying them to the cloud.

I am not concentrating on the installation of Docker itself, as there are so many articles about that out there. You will have no problem finding help articles or videos detailing it for your platform.

Docker Compose and Docker CLI (Command Line Interface) are two different tools provided by Docker, although they are often used together.

Docker CLI is a command-line interface tool that allows users to interact with Docker and manage Docker containers, images, and networks from the terminal. It provides a set of commands that can be used to create, start, stop, and manage Docker containers, as well as to build and push Docker images.

Docker Compose, on the other hand, is a tool for defining and running multi-container Docker applications. It allows users to define a set of services and their dependencies in a YAML file and then start and stop the entire application with a single command. Docker Compose also provides a way to manage the lifecycle of the containers as a group, including scaling up and down the number of containers.

I prefer the use of Docker Compose, as it makes it easy to replicate and tweak a setup between different servers.

There are tools like composerize, which allow you to easily transform a docker run command into a Compose file. It is also a nice way to combine multiple commands into one clean configuration.

Portainer is an open-source container management tool that provides a web-based user interface for managing Docker environments. With Portainer, users can easily deploy and manage containers, images, networks, and volumes using a graphical user interface (GUI) instead of using the Docker CLI. Portainer also provides features for monitoring container and system metrics, creating and managing container templates, and configuring and managing Docker Swarm clusters.

Portainer is designed to be easy to use and to provide a simple and intuitive interface for managing Docker environments. It supports multiple Docker hosts and allows users to switch between them easily from the GUI. Portainer also provides role-based access control (RBAC) to manage user access and permissions, making it suitable for use in team environments.

Portainer can be installed as a Docker container and can be used to manage both local and remote Docker environments. It is available in two versions: Portainer CE (Community Edition) and Portainer Business. Portainer CE is free and open-source, while Portainer Business provides additional features and support for enterprise users.

Portainer is my tool of choice, as it lets you create stacks. A stack is a collection of Docker services that are deployed and managed as a single entity. A stack is defined in a Compose file (in YAML format) that specifies the services and their configurations.

When a stack is deployed, Portainer creates the required containers, networks, and volumes and starts the services in the stack. Portainer also monitors the stack and its services, providing status updates and alerts in case of issues or failures.

As I said, it's important for me to easily transfer a single container or stack to another server. The stack itself can be easily copied and reused. But how do we easily export the setup of a single running container into a docker-compose file?

docker-autocompose to the rescue! This docker image allows you to generate a docker-compose yaml definition from a docker container.

    docker pull ghcr.io/red5d/docker-autocompose:latest

Export single or multiple containers

    docker run --rm -v /var/run/docker.sock:/var/run/docker.sock ghcr.io/red5d/docker-autocompose <container-name-or-id> <additional-names-or-ids>...

Export all containers

    docker run --rm -v /var/run/docker.sock:/var/run/docker.sock ghcr.io/red5d/docker-autocompose $(docker ps -aq)

This has also been a great tool to quickly back up all relevant container information. Apart from the persistent data, this is the most important information you need to quickly restore a setup.

Backup, backup … backup! I learned my lesson when it comes to restoring Docker setups ;) It's so easy to forget the little tweaks you made to the setup of a Docker container.

Starting tomorrow …

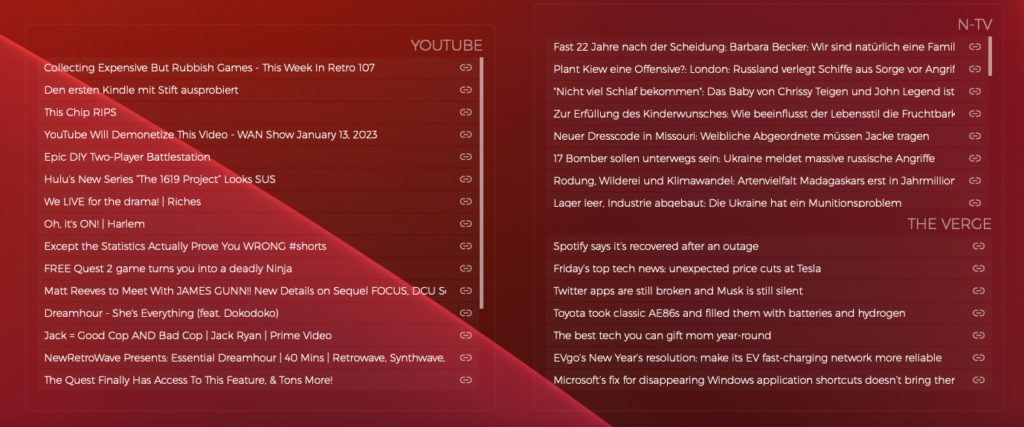

“Übersicht” is a German word that means “overview” or “summary” in English. The name of the software was chosen because it allows users to have a quick overview of various information on their desktop. It’s also a play on words, because in German the name of the software can be translated as “super sight” which refers to the ability to have a lot of information at a glance.

Übersicht is a lightweight and powerful application that allows users to create and customize desktop widgets using web technologies such as HTML, CSS, and JavaScript. With Übersicht, you can create widgets that display information such as the weather, calendar events, system resource usage, and more. The widgets can be customized to display the information in any format and can be positioned anywhere on the desktop, allowing you to create a personalized and efficient workspace.

Übersicht widgets are created using a simple and user-friendly interface that allows you to preview and edit the widget’s code. The widgets are also highly customizable, allowing you to change the appearance and behavior of the widgets to suit your needs. Additionally, Übersicht widgets can be shared with other users, and there are a variety of widgets available for download from the developer’s website.

The application is open-source, free to use and lightweight, so it should not noticeably affect your computer's performance. Overall, Übersicht is a powerful and versatile tool for creating custom desktop widgets on macOS.

There are several other tools similar to Übersicht that allow you to create custom desktop widgets. Some of the most popular alternatives include:

With many external systems in play, it's always crucial to keep an eye on things. I have built nice dashboards using Grafana, InfluxDB, NetData and Prometheus. But they are either displayed in a browser window or on a separate screen.

If you have 2 / 3 monitors or an ultra-wide screen, you have a lot of Desktop real-estate you can use.

That is something that I have been looking at for years, but only tried for a short period of time. This year I really want to build out desktop and menu widgets that help me get an even better overview of things and reduce repetitive tasks.

I will share links to things I like and share access to the widgets I build myself or tweaked.

These are the current things that are in progress or planned.

Nothing to share yet ….

“Git is a DevOps tool used for source code management. It is a free and open-source version control system used to handle small to very large projects efficiently. Git is used to tracking changes in the source code, enabling multiple developers to work together on non-linear development.”

Many use GitHub to host remote Git repositories, but you can use other hosts like Bitbucket, GitLab, Google Cloud Source Repositories …. You can also host your own repository on your local NAS or remote server.

I will use this article to collect common problems I encountered and possible solutions.

A push failed both in GitHub Desktop and when pushing from the command line. The best bet is to experiment with different git config options.

Setting core.compression to 4 worked for me and the push went through nicely.

    git config --global core.compression 0
    git config --global core.compression 1
    git config --global core.compression 2
    git config --global core.compression 3
    git config --global core.compression 4

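Other config options that can be tweaked are listed below; each expects a buffer size in bytes as its value. Only http.postBuffer is a documented Git setting (it also covers HTTPS remotes). The https.* and ssh.* variants are widely suggested but are not documented by Git, so they may have no effect.

    git config --global http.postBuffer
    git config --global https.postBuffer
    git config --global ssh.postBuffer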

Read more about them here

jQuery has provided easy access to complicated core JavaScript solutions in the past and has been shielding us from difficult workarounds for legacy browsers. But times have changed and many of those things can now be done just as easily using JavaScript directly.

jQuery is a fast, small, and feature-rich JavaScript library. It makes interactions with HTML documents easy, and is widely used in web development to add features to web pages and to simplify the process of writing JavaScript. With a combination of versatility and extensibility, jQuery has changed the way that millions of people write JavaScript.

That being said, jQuery is still a popular and widely used library, and there are many valid reasons to continue using it. It is ultimately up to you to decide whether the benefits of using jQuery outweigh the potential drawbacks in your particular situation.

Easily search and compare direct JavaScript solutions to jQuery ….

How jQuery does it:

    $.getJSON('/my/url', function (data) {});

Pure JavaScript:

    const response = await fetch('/my/url');
    const data = await response.json();
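One thing to keep in mind when switching: `fetch` only rejects on network failures, not on HTTP error statuses like 404 or 500. A small wrapper, sketched here, covers that. The name `getJSON` and the `fetchImpl` parameter are my own choices for this example; the parameter only exists so the helper can be tried without a network.

```javascript
// Fetch a URL and parse the body as JSON, rejecting on HTTP error statuses.
// `fetchImpl` defaults to the global fetch and can be swapped for a stub.
async function getJSON(url, fetchImpl = fetch) {
  const response = await fetchImpl(url, { headers: { Accept: 'application/json' } });
  if (!response.ok) {
    throw new Error(`Request failed with status ${response.status}`);
  }
  return response.json();
}
```

Usage then looks almost like the jQuery version: `const data = await getJSON('/my/url');`.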

UmbrellaJS is a lightweight JavaScript library that provides a number of utility functions and features for working with DOM elements and handling events. It was designed to be small, fast, and easy to use, and it does not have any dependencies on other libraries.

Some of the features provided by UmbrellaJS include:

UmbrellaJS is a good choice for developers who want a simple, lightweight library for working with DOM elements and handling events. It is especially well-suited for smaller projects or for developers who want to avoid the overhead of larger libraries like jQuery.

Selector demos:

    u('ul#demo li')
    u(document.getElementById('demo'))
    u(document.getElementsByClassName('demo'))
    u([ document.getElementById('demo') ])
    u( u('ul li') )
    u('<a>')
    u('li', context)

Documentation

Migrate from jQuery

HTMX is a JavaScript library that allows you to add asynchronous communication and other interactive features to your web pages using HTML attributes and elements. It allows you to make AJAX (Asynchronous JavaScript and XML) requests and handle responses directly in your HTML, without the need for writing any JavaScript code.

HTMX works by intercepting events on HTML elements and making asynchronous requests based on the attributes you specify. For example, you can use the hx-get attribute to make a GET request to a specified URL, and use the hx-trigger attribute to specify an event that should trigger the request. You can also use the hx-target attribute to specify an element on the page where the response should be inserted.

Here’s an example of how you might use HTMX to make a GET request and insert the response into a div element:

    <button hx-get="/some/url" hx-target="#target">Load Data</button>

    <div id="target"></div>

When the button is clicked, HTMX will make a GET request to /some/url and insert the response into the div element with the id of target.
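To get a feeling for what HTMX saves you, here is roughly the same behaviour written by hand. It is a simplified sketch and not how HTMX is implemented internally: `loadInto` is a made-up helper, and `fetchImpl` is only a parameter so the function can be run outside a browser.

```javascript
// Roughly what the hx-get / hx-target pair above does:
// request a URL and put the response body into a target element.
async function loadInto(target, url, fetchImpl = fetch) {
  const response = await fetchImpl(url);
  const html = await response.text();
  target.innerHTML = html; // HTMX swaps the target's innerHTML by default
  return target;
}
```

In the browser you would wire it up yourself, for example `button.addEventListener('click', () => loadInto(document.querySelector('#target'), '/some/url'));`. With HTMX the two attributes replace all of that.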

HTMX is designed to be easy to use and flexible, and it can be used to add a wide range of interactive features to your web pages.

I am currently using HTMX in one of my long-term projects and will be talking about it more in a separate article in the future!

HTMX Reference / Documentation

It is ultimately up to you to decide which approach is best for your particular project. Sometimes a combination is needed ;)

Facebook sucks … and here is how to save pages connected to a gray account! It might not work for everyone, but it solved it for me.

“A gray account is an account used to log into Facebook that is not associated with a personal profile or account. People used to be able to manage their Pages with gray accounts before we required individuals to have a personal Profile in order to create, manage, or run ads on a Page.” – Facebook Help

Normally it should be easy to transfer a page to a new administration account. Right? RIGHT!

My customer's page was on the new profile page layout that was introduced a while back. So normally you would go to the Page -> Professional Dashboard -> Settings -> Site Access. This would then allow you to assign a new page admin.

This just straight up fails for me! I can choose a new person, select them and allow full access, confirm with my password, and then nothing happens. When checking the console, I see a couple of random errors …

Tried with different accounts, different browsers, different OS. Always the same …

After trying everything and almost giving up, I thought: well, you can still switch back to the old page layout, maybe that works!

And that is what finally worked for me. I was able to assign my customer as a new admin within Settings -> Site Roles and then switch back to the new page layout!

Again … Facebook sucks! Who is test-driving updates and checking for incoming errors? It seems that no one cares. They just leave it to the users to solve their own problems. There is not a single resource that actually helps. I am sure that many have already lost their pages! Just unbelievable!!!

Happy coding!

Papa Parse is a powerful, in-browser CSV parser for the big boys and girls :)

If you do need easy CSV parsing and conversion back to CSV, take a look at it!

    // Parse CSV string
    var data = Papa.parse(csv);

    // Convert back to CSV
    var csv = Papa.unparse(data);

    // Parse local CSV file
    Papa.parse(file, {
        complete: function(results) {
            console.log("Finished:", results.data);
        }
    });

    // Stream big file in worker thread
    Papa.parse(bigFile, {
        worker: true,
        step: function(results) {
            console.log("Row:", results.data);
        }
    });

    <input type="file" name="File Upload" id="txtFileUpload" accept=".csv" />

    document.getElementById('txtFileUpload').addEventListener('change', upload, false);

    function upload(evt) {
        var file = evt.target.files[0];
        var reader = new FileReader();

        // Register the handlers before starting the read
        reader.onload = function(event) {
            var csvData = event.target.result;
            // header: true maps each row to an object keyed by the first line
            var data = Papa.parse(csvData, {header : true});
            console.log(data);
        };
        reader.onerror = function() {
            alert('Unable to read ' + file.name);
        };

        reader.readAsText(file);
    }

Papa Parse is the fastest in-browser CSV (or delimited text) parser for JavaScript. It is reliable and correct according to RFC 4180, and its features include support for <input type="file"> elements.

I am currently using it to quickly parse Adobe Audition CSV marker files and prepare them for storage for my podcast.

    Name                  Start     Duration  Time Format  Type  Description
    Ankündigungen         0:20.432  0:00.000  decimal      Cue
    Stargate Table Read   1:12.811  0:00.000  decimal      Cue
    Dune Part One 2021    1:58.677  0:00.000  decimal      Cue
    Dust - Cosmo          2:20.925  0:00.000  decimal      Cue
    Nina - Beyond Memory  2:51.276  0:00.000  decimal      Cue
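One possible preparation step for storage is turning the Start column into plain seconds. The following is a sketch of how that could look, not the exact code I run: `markerTimeToSeconds` is a hypothetical helper, and the support for a leading hours part is an assumption, as the sample above only shows minutes and seconds.

```javascript
// Convert a marker timestamp such as "1:12.811" (minutes:seconds.milliseconds)
// into seconds. A leading hours part ("1:02:03.500") is accepted as well;
// that longer form is an assumption and does not appear in the sample above.
function markerTimeToSeconds(value) {
  const text = String(value).trim();
  if (!/^\d+(:\d+){0,2}(\.\d+)?$/.test(text)) {
    throw new Error(`Unrecognized time value: ${value}`);
  }
  // Fold from the left: each step shifts the running total by a factor of 60.
  return text
    .split(':')
    .map(Number)
    .reduce((total, part) => total * 60 + part, 0);
}
```

With `header: true` each parsed row is an object, so the conversion can be applied as `markerTimeToSeconds(row.Start)`.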

    Papa.unparse(tabData, {
        delimiter: "\t", // tab-separated output
        header: true,
        newline: "\r\n",
        skipEmptyLines: true
    })